Contra Kahan on motivated reasoning

Facts don't change minds? Identity-protective cognition and belief polarization turn out to be much rarer than we thought

What good is the truth?

Of course, we must truly believe that our bodies need water in order to stay alive. We must figure out which foods are poisonous in order to avoid death. And if we falsely believe that we are performing well at our job, we might get fired.

Notice the pattern: If I base decisions on statement X, then it’s important to me that X is true. If I break a dear friendship because that friend turns out to be homophobic, it is important for the rightness of that decision that he actually is. If I move from my native village on the coast because I estimate I can’t continue living there long-term due to sea-level rise, the sea level must actually rise that much, otherwise I have made that sacrifice for nothing.

But how often does a typical factual belief actually function as a “node” in a decision network like that?

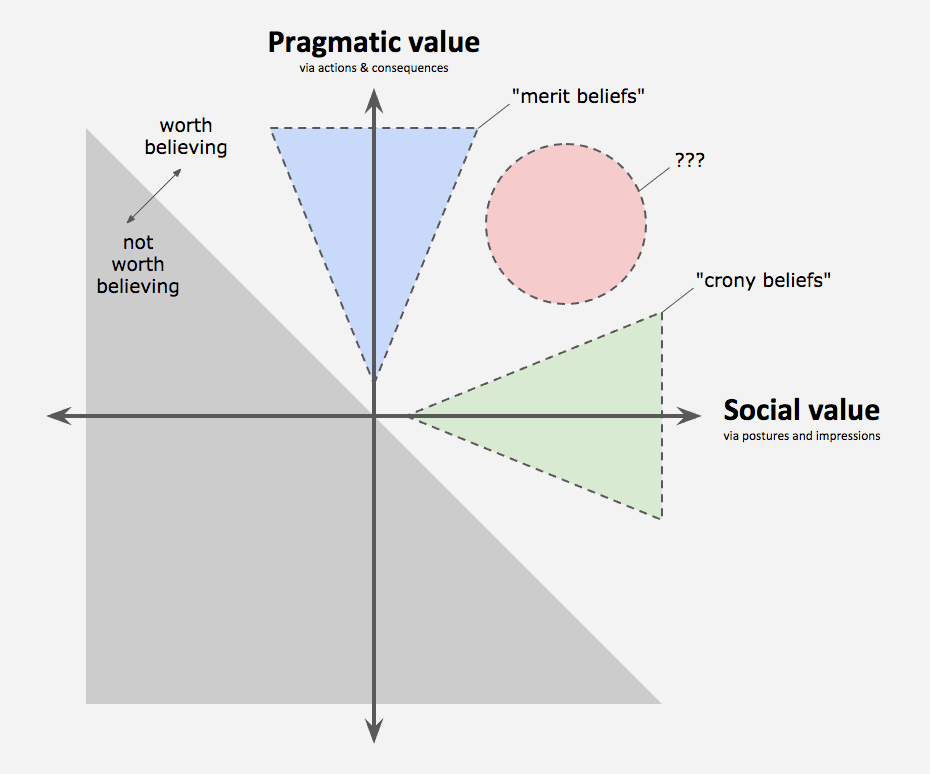

According to Kevin Simler – who co-wrote The Elephant in the Brain with Robin Hanson – the answer is ‘almost never’. Most of our beliefs are what he calls “crony beliefs”. They are beliefs the truth or falsity of which doesn’t really matter for you because you hardly base any choices on them anyway.

Psychologist Paul Bloom (whom you might know from Against Empathy) illustrates:

If I have the wrong theory of how to make scrambled eggs, they will come out too dry; if I have the wrong everyday morality, I will hurt those I love. But suppose I think that the leader of the opposing party has sex with pigs, or has thoroughly botched the arms deal with Iran. Unless I’m a member of a tiny powerful community, my beliefs have no effect on the world. This is certainly true as well for my views about the flat tax, global warming, and evolution. They don’t have to be grounded in truth, because the truth value doesn’t have any effect on my life. (…) To complain that someone’s views on global warming aren’t grounded in the fact, then, is to miss the point.

So on the one hand, the truth value of many of our beliefs doesn’t really matter. But what does matter, on the other hand, is how the belief is perceived by members of the group on which we depend for access to resources, allies and mates. As Steven Pinker writes:

“People are embraced or condemned according to their beliefs, so one function of the mind may be to hold beliefs that bring the belief-holder the greatest number of allies, protectors, or disciples, rather than beliefs that are most likely to be true.”

As a consequence, the reasons we (say we) have these beliefs are social rather than functional. For even if some beliefs have no pragmatic utility, others can still see and react to them.

We can’t afford to be wrong about whether a bear is chasing us, but being wrong about climate change, to continue the example, will impose no personal cost on us at all, especially when compared to the cost of challenging the sacred beliefs of our group. So our incentives come entirely from the way other people judge us for what we believe, not from the way we act on those beliefs.

The rare exception would be someone living near the Florida coast, say, who moves inland to avoid predicted floods. (Does such a person even exist?) Or maybe the owner of a hedge fund or insurance company who places bets on the future evolution of the climate. But for the rest of us, there are hardly any actions we can take whose payoffs (for us as individuals) depend on whether our beliefs about climate change are true or false.

By contrast, once a belief becomes important to the way we think about ourselves or important to the group we identify with, changing it becomes very costly. Breaking with the group carries the prospect of losing all manner of peer support. Which explains why false beliefs are often adaptive. They can provide us with psychological comfort, foster group loyalty and belonging, and serve a variety of other ends unrelated to truth.

Given that most beliefs are crony beliefs, it would seem that these social incentives often trump any benefit increased accuracy might bring. This means we often have little incentive to get at the truth.

From crony beliefs to identity-protective cognition

This is the plausible rationale behind Dan Kahan’s theory of “cultural” or “identity-protective” cognition.

Kahan, a law prof at Yale, reasons like this:

‘So forming beliefs contrary to the ones that prevail in one’s group risks estrangement from others on whom one depends for material and emotional support. That probably means all of us have acquired habits of mind that guide us to form and persist in beliefs that, against the backdrop of social norms, express our membership in and loyalty to a particular identity-defining affinity group. This implies that when a certain topic becomes polarized and we have to believe something – that there are (no) biological differences between the sexes, that gun laws (don’t) deter crime, that there’s (no) man-made climate change – as a requirement to keep in good standing with our tribe, identity-protective cognition takes over. This means there’s no point in conveying unwelcome facts and evidence. Because people will probably not use their brains to figure out which beliefs are best supported by the evidence. But to figure out how to best justify assessments of the evidence that fit with dominant position within their cultural group.’

That’s the basic idea.

As a consequence, on those topics, facts will increase rather than decrease polarization. Partisans polarize because they use the consistency of any evidence with their groups’ positions to determine whether that evidence should be given any weight at all. Studies, experts, and so on, that do not agree with the group’s position X are dismissed on the grounds that, since they conclude not-X, they cannot be genuine experts and sound studies. Because we weigh the evidence so differently, we’re stuck in our opposing camps and don’t converge by considering the same information (the way you and I might come to agree about the number of apples on the tree across from my house by considering the results from a big apple-counting study I did this morning).

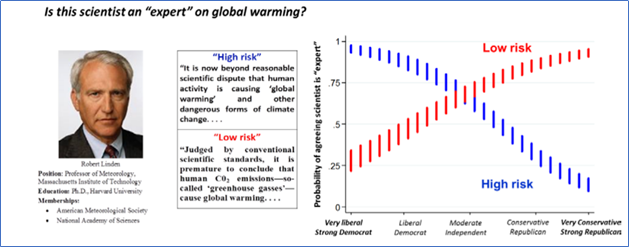

In support of this, Kahan et al. discovered, in a bunch of papers, a strong correlation between people’s political identities and their evaluations of such information. Participants rate experts and studies highly when they favor their political worldview and poorly when they impugn it.

For example, in one study they asked people to indicate whether they regarded particular scientists on climate change as “experts”. The subjects’ assessments of the scientists’ expertise depended on the fit between the position attributed to the expert and the position held by most of the subjects’ cultural peers. If the featured scientist was depicted as endorsing the dominant position in a subject’s cultural group, the subject was highly likely to classify that scientist as an “expert” on that issue; if not, then not.

In a similar way, numerous studies have shown that people rate studies supporting their views as more valid, convincing, and well done than those opposing their views, even when all aspects of the studies are identical except for the direction of the findings.

The idea that such identity-protective cognition causes groups to drift further apart – and away from the truth – is popular these days. The New York Times, for example, has claimed that “Americans’ deep bias against the political party they oppose is so strong that it acts as a kind of partisan prism for facts, refracting a different reality to Republicans than to Democrats.” The common suggestion is that there’s no point in bothering with facts anyway because people will just dismiss them if they’re inconvenient.

What else, besides climate change for specific American groups?

Okay, so we’ve seen how, because espousing beliefs that are not congruent with the dominant sentiment in one’s group could threaten one’s position or status within the group, people may be motivated to protect their cultural identities. That leads them to dismiss contrary evidence, which in turn presumably stops them from updating their beliefs as a result of this evidence.

Still, this social incentive to endorse whichever position reinforces our connection to others with whom we share social ties doesn’t arise automatically. It requires that what you believe has a big enough effect on how you’re accepted in your social circle. Only in those cases does it become perversely rational for people to affirm the validating beliefs of their social circle and reject contrary information come what may. And so only in those cases might exposing people to information about the scientific consensus further attitude polarization by reinforcing people’s “cultural dispositions” to selectively attend to evidence.

But how frequent are those cases?

For one, the cultural cognition project has only studied American culture, which is considered a massive outlier when it comes to polarization. So chances are the social incentives to engage in motivated reasoning are a lot weaker in other countries. For this reason, there is a good argument to be made that inferring the prevalence of motivated reasoning from American samples will overestimate its frequency.

Moreover, even in that culture, identity-protective cognition is activated by only a very small number of issues. As Kahan himself stresses:

“From the dangers of consuming artificially sweetened beverages to the safety of medical x-rays to the cardiogenic effect of exposure to power-line magnetic fields, the number of issues that do not polarize the public is orders of magnitude larger than the numbers that do.”

Cultural cognition is, as Cambridge psychologist Sander van der Linden concludes, “not a theory about culture or cognition per se, [but merely] a thesis that aims to explain why specific American groups with opposing political views disagree over a select number of contemporary science issues.”

Kahan’s work doesn’t support the popular notion that people are often insensitive to evidence as a result of motivated reasoning, so that facts won’t change their minds anyway. For all we know, that might be the (rare) exception, not the rule.

Behind the numbers of motivated reasoning research

Even so, Kahan and his team have observed this pattern for a number of culturally divisive issues such as climate change, nuclear waste disposal and gun control. Even if it’s just America, the fact that the same pattern holds over multiple cases boosts its credibility, right?

In theory, yes. However, there are reasons to think that we cannot even conclude much about the frequency of motivated reasoning from those cases. To see those, we need to go into the design of studies on motivated reasoning.

This design first involves randomly assigning people to one of two (or three, if a control is included) treatments. Next, in each treatment, people receive some information. Across treatments, almost all characteristics of the information are held constant, save for its upshot, which is manipulated to be consistent with either one type of outcome or another (e.g., an expert asserting that gun control laws reduce crime or do not reduce crime). Researchers measure people’s evaluations of the reliability of the information on self-report scales as the key dependent variable. They also take note of covariates, most obviously political identity. The critical inferential test is then conducted on the interaction between treatment (i.e., information) and covariate (e.g., political identity or some other preference for one outcome vs. another). If the effect of the information on people’s reliability evaluations depends on their political identities, motivated reasoning is typically inferred.
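To make the interaction test concrete, here is a minimal sketch in Python. The ratings are invented for illustration and do not come from any of the studies discussed; the function names are likewise hypothetical. It computes only the point estimate of the interaction (a difference-in-differences of cell means), whereas real studies would fit a regression with an interaction term and test it for significance.

```python
from statistics import mean

# Hypothetical reliability ratings on a 1-7 scale, invented for illustration.
# Keys are (political identity, randomly assigned treatment).
RATINGS = {
    ("liberal", "reduces_crime"): [6, 5, 6, 7],
    ("liberal", "no_effect"): [3, 2, 3, 4],
    ("conservative", "reduces_crime"): [2, 3, 3, 2],
    ("conservative", "no_effect"): [6, 6, 5, 7],
}


def treatment_effect(identity):
    """Mean rating under 'reduces_crime' minus mean rating under 'no_effect'."""
    return (mean(RATINGS[(identity, "reduces_crime")])
            - mean(RATINGS[(identity, "no_effect")]))


def interaction_contrast():
    """Difference between the two groups' treatment effects."""
    return treatment_effect("liberal") - treatment_effect("conservative")


print("effect among liberals:", treatment_effect("liberal"))
print("effect among conservatives:", treatment_effect("conservative"))
print("interaction contrast:", interaction_contrast())
```

A contrast far from zero means the same message is rated differently depending on who reads it. That pattern is what gets interpreted as motivated reasoning; the number itself says nothing about why the groups differ.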

The graph in figure 1, where people view a highly credentialed scientist as an expert on climate change much less readily when the scientist takes the view opposed to rather than supportive of the one dominant in their political group, is an example of this.

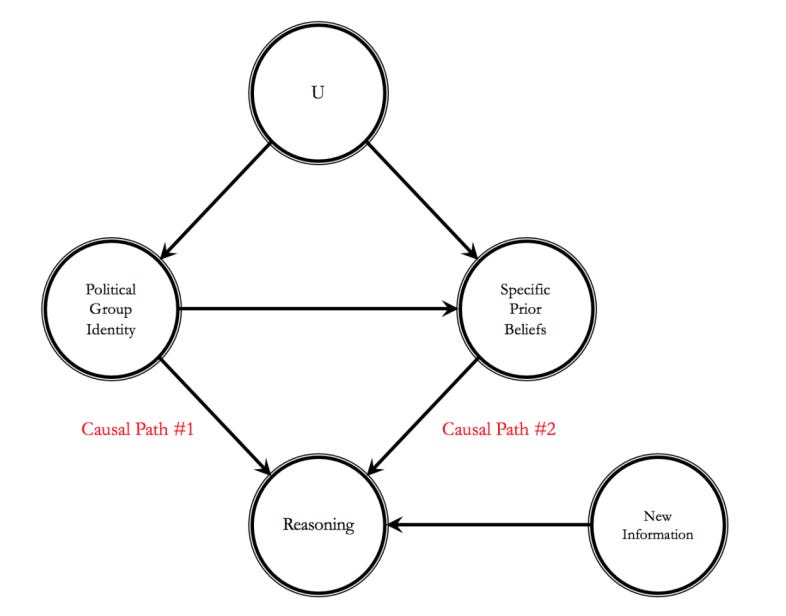

While such results are frequently regarded as evidence for identity-protective cognition, a shortcoming of these study designs is that people’s political identities and worldviews are often correlated with what information they have been exposed to and what they believed before they came into the lab. Consequently, in such designs, “switching” the information so that it impugns (versus favors) people’s political identities or worldviews simultaneously “switches” the information so that it is incoherent (versus coherent) with their issue-specific prior beliefs and experiences.

This introduces an ambiguity into the design, because such coherence between new information and specific prior beliefs affects people’s reasoning about new information in general, irrespective of identity protection. If some message fits with your pre-existing beliefs, it’s rational for you to conclude it’s better information than when it does not. For example, if I were to see something now that looks like a crocodile, my preexisting belief that there are no crocodiles near here will rightly lead me to give less weight than I otherwise would to this visual information. That has nothing to do with cultural cognition.

So the observed differences in the evaluation of the information might be the result of participants having different prior beliefs which they use to check that information, not of them protecting their identities.
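A toy Bayesian calculation shows how this can happen without any identity motive. The sketch below is my own illustration, not a model taken from the studies: it assumes a source is either reliable (right with probability `accuracy`) or unreliable (asserts the claim or its negation by coin flip), and all numbers and names are hypothetical.

```python
def posterior_reliability(prior_h, prior_reliable, accuracy=0.9):
    """P(source is reliable | source asserts H) for a Bayesian observer.

    Illustrative assumptions: a reliable source is right with probability
    `accuracy`; an unreliable source asserts H or not-H with probability 0.5.
    """
    says_h_if_reliable = prior_h * accuracy + (1 - prior_h) * (1 - accuracy)
    says_h_if_unreliable = 0.5
    numerator = prior_reliable * says_h_if_reliable
    denominator = numerator + (1 - prior_reliable) * says_h_if_unreliable
    return numerator / denominator


# Two observers see the same source assert H. They differ only in their prior
# belief in H; both start out 50/50 on whether the source is reliable.
believer = posterior_reliability(prior_h=0.8, prior_reliable=0.5)
skeptic = posterior_reliability(prior_h=0.2, prior_reliable=0.5)

print(f"believer: P(reliable) = {believer:.2f}")
print(f"skeptic:  P(reliable) = {skeptic:.2f}")
```

Under these assumptions the observer who already believed H ends up rating the source as more likely reliable (about 0.60) and the one who doubted H as less likely reliable (about 0.34). Neither has any stake in the answer; the gap comes from the priors alone.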

Secondly and most crucially, in the foregoing designs, motivation is not randomly assigned. A direct corollary is that the results from these designs are indeed susceptible to the confounding explanation based on prior beliefs just outlined, because the random assignment of information varies the consistency of that information not only with people’s preferences, political identities, and so on, but also with their prior beliefs. The result is an “empirical catch-22 in motivated reasoning research.” It precludes the inference that motivation causes the observed patterns of information evaluation, because the observed patterns might just as well have been caused by different prior beliefs.

In this light, then, the observed patterns of information evaluation may reduce to people being more receptive to evidence that confirms their prior beliefs. And, as I argued two weeks ago, confirmation bias in the interpretation of new information does not provide particularly convincing evidence of a violation of rational Bayesian inference.

But it might not even be that. Research by Jon Krosnick and Bo MacInnis indicates that the growing division between Republicans and Democrats on the issue of global warming might be due to selective exposure to partisan media, rather than to such differential receptivity to confirming versus disconfirming information. A motivated reasoning account predicts that Republicans will be more open than Democrats to being influenced by Fox News stories on global warming, and that Democrats will be more open than Republicans to being influenced by mainstream news stories on global warming. But their analyses indicate that a unit of time spent watching Fox News influences the climate-related opinions of Republicans and Democrats in the same way. That is, it’s not the case that Republicans are more persuaded by Fox News than Democrats. Instead, it appears that Republicans and Democrats diverge in their opinions about global warming due to selective exposure to different news sources, where the dose of exposure rather than motivated reasoning regulates this influence.
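The difference between the two accounts can be sketched with a toy dose model. Everything here is hypothetical: the 0-100 opinion scale, the per-hour effects, and the viewing hours are invented to illustrate the logic and are not estimates from Krosnick and MacInnis.

```python
def opinion_after_viewing(baseline, hours_fox, hours_mainstream,
                          effect_per_fox_hour=-2.0,
                          effect_per_mainstream_hour=2.0):
    """Belief in global warming (0-100) after some hours of TV news.

    The per-hour effects are the SAME for every viewer, so any divergence
    between groups can only come from how much of each source they watch.
    """
    return (baseline
            + hours_fox * effect_per_fox_hour
            + hours_mainstream * effect_per_mainstream_hour)


# Same starting opinion, same receptivity, different viewing habits.
republican = opinion_after_viewing(50, hours_fox=5, hours_mainstream=1)
democrat = opinion_after_viewing(50, hours_fox=1, hours_mainstream=5)
print(republican, democrat)

# With identical viewing habits the two groups do not diverge at all.
same_diet = opinion_after_viewing(50, hours_fox=3, hours_mainstream=3)
print(same_diet)
```

A motivated reasoning account would instead give each group its own per-hour effects. In this sketch the gap opens up purely because the two viewers consume different amounts of each source.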

You need to measure people’s actual opinions for establishing polarization

Another issue with the study design is that it measures how people evaluate the study or expert, rather than how they actually (fail to) update their views in light of this information. Yet the latter seems more relevant for establishing the (ir)relevance of facts for getting people to change their minds on sensitive issues, and also for arguing that partisans’ actual opinions polarize in response to mixed evidence on sensitive issues as a result of them rating information differently. You need to ask about people’s actual opinions for that, not just what they think about some experiment or other.

Especially because – counterintuitively – the two outcome variables of (i) information evaluations versus (ii) posterior beliefs following exposure to the information can yield divergent results. For example, Ziva Kunda studied how heavy-coffee-drinking women evaluated a piece of research that linked heavy coffee drinking among women to a specific type of disease. The heavy-coffee-drinking women evaluated the research more negatively than women who drank little coffee, and men. This is conceptually akin to the biased-evaluations-of-information result described previously, but in an apolitical domain, and implies that the heavy-coffee-drinking women were resistant to the new and undesirable information. However, Kunda also measured subjects' posterior beliefs about their likelihood of contracting the disease. She found evidence of substantial belief updating towards the information on the part of the heavy-coffee-drinking women, suggesting they incorporated the information into their prior beliefs, despite their negative evaluations. This result was not discernible from the information evaluations alone, however, and illustrates that the two outcomes can privilege different interpretations.
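That the two outcomes can come apart is not mysterious even for an idealized Bayesian reasoner. The following sketch is my own toy model, not Kunda's analysis: it assumes a source that is either reliable (right with probability `accuracy`) or unreliable (a coin flip), and the probabilities are invented for illustration.

```python
def update_on_report(prior_h, prior_reliable, accuracy=0.9):
    """Return (posterior belief in H, posterior belief the source is reliable)
    after the source asserts H.

    Illustrative assumptions: a reliable source is right with probability
    `accuracy`; an unreliable source asserts H or not-H with probability 0.5.
    """
    says_h_if_true = prior_reliable * accuracy + (1 - prior_reliable) * 0.5
    says_h_if_false = prior_reliable * (1 - accuracy) + (1 - prior_reliable) * 0.5
    p_report = prior_h * says_h_if_true + (1 - prior_h) * says_h_if_false

    posterior_h = prior_h * says_h_if_true / p_report

    says_h_if_reliable = prior_h * accuracy + (1 - prior_h) * (1 - accuracy)
    posterior_reliable = prior_reliable * says_h_if_reliable / p_report
    return posterior_h, posterior_reliable


# A reader who doubts H (prior 0.2) and starts 50/50 on the source.
belief, trust = update_on_report(prior_h=0.2, prior_reliable=0.5)
print(f"belief in H: 0.20 -> {belief:.2f}")
print(f"trust in source: 0.50 -> {trust:.2f}")
```

With these numbers the reader's trust in the source drops (to about 0.34) while their belief in the unwelcome claim still rises (to about 0.37). A low evaluation of the information and a belief shift toward it can coexist.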

Interestingly, it turns out that Kahan’s polarization-prediction does not materialize if you specifically examine people’s opinion shift after telling them about the scientific consensus (rather than examining what they think about some expert or study).

The simple effect of simple facts

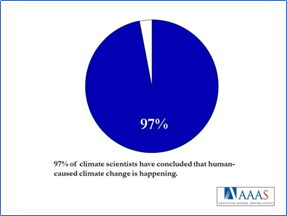

Exposing people to a simple cue about the scientific consensus on climate change typically leads to a significant change in public perception of the scientific consensus of around 15%. Highlighting the scientific consensus has also been found to neutralize polarizing worldviews in the case of vaccines and other issues.

At least, that is what experiments designed like this tell us. To measure the impact of communicating the scientific consensus, you ask people some questions about climate change, disguised among questions about a bunch of other stuff to make the survey look like a regular public opinion poll. In a 2015 paper by the aforementioned Sander van der Linden, these included what people think about Angelina Jolie’s double mastectomy and about the National Transportation Safety Board’s recommendation to reduce the “drunk-driving” blood-alcohol level. After these questions, you distract them further by showing them an article about the 2014 Star Wars Animated Series. Only then do you tell them that they will randomly be shown one of several messages, all of which, unbeknownst to the participants, contain the statement that “97% of climate scientists have concluded that human-caused climate change is happening.” After that, you simply ask them some further questions about other stuff and again some about climate change, and compare their answers on the climate questions from before and after they were shown the message.

Such studies typically find that this simple objective information about the scientific consensus can effectively change people’s beliefs about the consensus.

This might not say much, however, because participants might indicate higher percentages in round two because they don’t want to look stupid after having seen the 97% message. So it’s revealing that these experiments usually also find that a change in a respondent’s estimate of the scientific consensus significantly influences the belief that climate change is happening, human-caused, and the extent to which they worry about the issue and think we should do something about it. Belief in the scientific consensus functions as an initial “gateway” to changes in key beliefs about climate change, which in turn, influence support for public action.

So, despite its plausible backstory, the idea that facts only polarize people on sensitive topics is not correct. People may judge that information somewhat differently, but that appraisal difference does not lead to polarization, and is not due to motivated reasoning but to different prior beliefs. Despite the popularity of lamenting that people are irrational motivated reasoners who inappropriately ignore inconvenient facts, the specific conditions under which motivated reasoning and belief polarization occur are much more constrained than often assumed.